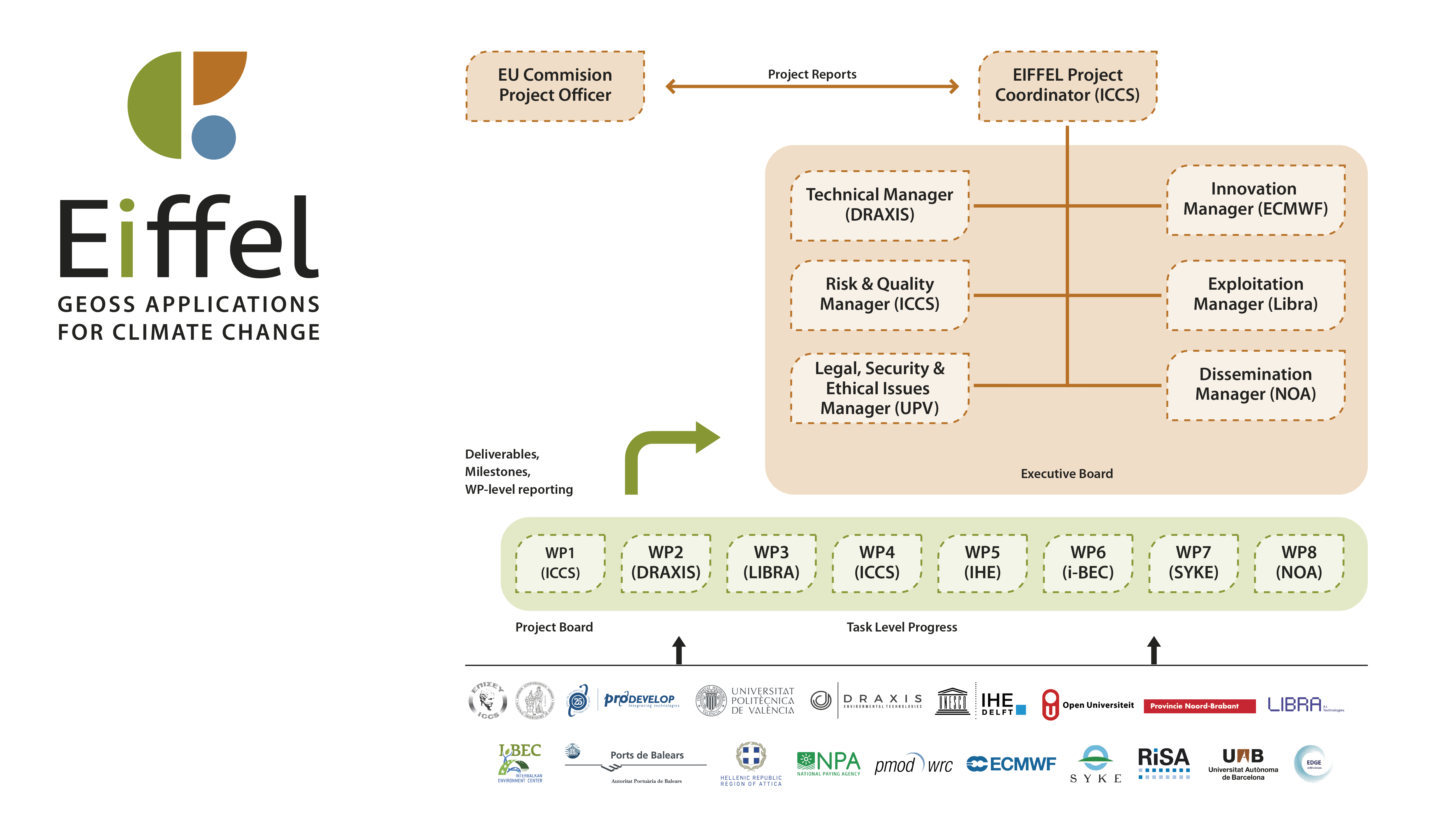

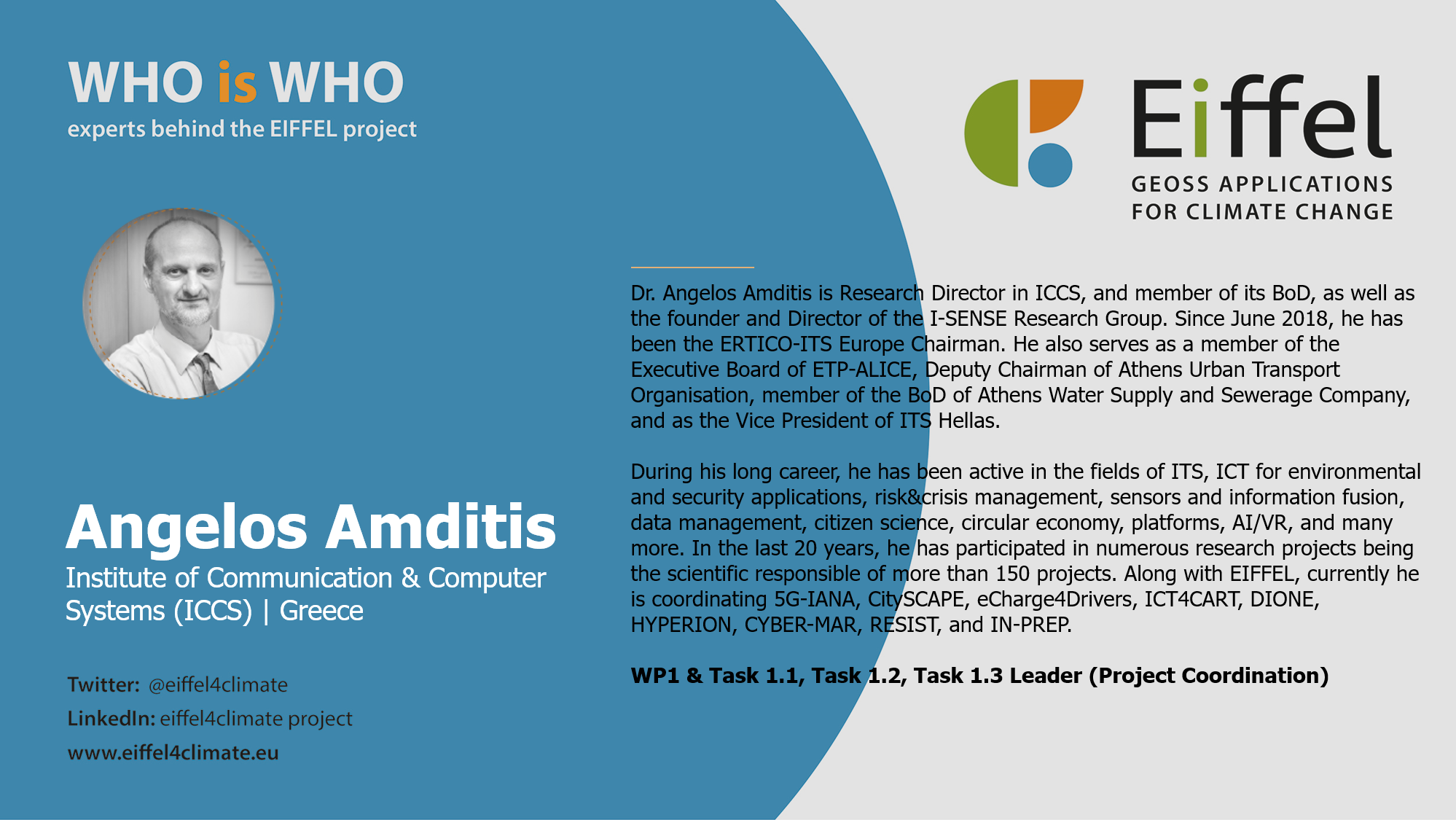

Project Coordination | ICCS

Ensure the achievement of project objectives with high quality and within the predefined time-schedule and budget constraints; Perform continuous monitoring of the project progress, assure the quality and innovation of all project deliverables and outputs.

Handle the procedures for receiving the EC financial contribution and distributing it among the consortium; Provide administrative management, including signing the Grant Agreement with the EC, collecting certificates on financial statements from partners, keeping records of expenditure and distributing EC payments.

Maintain the communication channels among the participants and the EC regarding the regular and updated technical and administrative reporting of the project progress and efforts.

Coordinate the technical activities of the project, ensuring coordination among technical WP leaders and the early identification and resolution of implementation issues.

Define and monitor the project risk and quality plan and procedures.

Compile and consolidate the project data management plan in at least three phases and on demand when needed; Guarantee that all contractual, legal, ethical, security, societal and gender equality issues related to the project research are properly considered and any relevant conventions are respected.

The project coordination will develop management processes and implement them for an efficient and successful project execution, following the PMBOK principles and best practices. The following tasks will be performed:

- Preparation of technical and financial interim reports on a six-month basis (see below) so that the deliverables and milestones of each period are closely followed and technical activities stay in line with resource consumption.

- Preparation of the external EC reports (intermediate in M18 and final report in M36) according to the EC guidelines, monitoring technical and financial activities as well as audit reports in applicable cases; Distribution of the EC financial contribution and costs coordination and controlling.

- Organisation of consortium meetings (project board, technical).

- Communication with EC and submission of deliverables to the EC.

- Ensuring the communication between partners at all levels and the proper information exchange.

- Support of the evolution of the work plan (time plan consistency, critical tasks highlighted), including in collaboration with T1.2 for technical matters.

WP1

WP2

Co-Design of Climate Change applications based on GEOSS | DRAXIS

Create the personas, user stories and scenarios for the CC applications using co-design principles.

Consolidate user needs into user requirements that are related to both EIFFEL tools and applications.

Prepare and appropriately classify the specifications for the EIFFEL tools and applications, and document them in a traceability matrix which will be updated until the end of the project.

Create a document that describes the system architecture and the interfaces among tools and applications.

Devise an integration plan early on in the project, which is to be reviewed once the alpha versions of EIFFEL technical components are in place and prior to the integration process starting.

In this task, the EIFFEL user stories and scenarios shall be compiled. The user stories will be inspired by the set of EIFFEL personas, created by the task leader together with the respective CoP/pilot leader, and will be crafted in a two-step approach. The first step will be a co-design process implemented during and after the focus groups taking place until M3. The focus groups shall consist of small groups of 6-10 participants whose profile, expertise and interests correspond to the stakeholders of each CC application. The focus groups shall include coordinated brainstorming sessions and hands-on sessions for the creation of user stories, facilitated by modern tools such as Trello or similar. The use of these tools shall allow a user story to be shaped and discussed jointly, giving the flexibility for off-line improvements and additions after the focus groups have concluded. The process will be facilitated by a focus group moderator and by a backlog of exemplary user stories circulated to participants prior to the focus groups. In the second step, the user stories shall be further elaborated into high-level scenarios. The user stories and high-level scenarios shall be made publicly available, shared in the project wiki or website. This way, the project outputs shall be made visible from an early stage, engaging CoPs and external stakeholders and allowing for maximum visibility. When this is completed, this task will collect needs and requirements based on the scenarios derived; an online survey circulated to the project network of stakeholders will be used to expand the user base. With the support of the CC applications' task leaders (IHE, i-BEC, PRO, NOA, SYKE), the needs will be transformed into requirements using a more formal language and taxonomised appropriately (e.g. with unique id, type, category, persona/scenario they relate to, associated EIFFEL tool or application, functional/non-functional etc.).
The formulated list of requirements will be properly framed to assist the design of both EIFFEL tools and applications.
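The requirement attributes listed above (unique id, type, category, persona/scenario, associated tool or application) can be illustrated with a minimal sketch. The class name, field names and sample entries below are hypothetical and only show one possible shape for a requirement record and a simple traceability view; they are not the project's actual schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    """One formalised requirement (field names are illustrative assumptions)."""
    req_id: str    # unique id
    kind: str      # "functional" or "non-functional"
    category: str  # free-form category label
    persona: str   # persona the requirement relates to
    scenario: str  # scenario the requirement was derived from
    target: str    # associated EIFFEL tool or application
    text: str      # the requirement statement itself


def traceability_matrix(requirements):
    """Group requirement ids by the tool or application they trace to."""
    matrix = defaultdict(list)
    for req in requirements:
        matrix[req.target].append(req.req_id)
    return dict(matrix)


# Hypothetical sample entries, for illustration only.
requirements = [
    Requirement("REQ-001", "functional", "search", "Water manager",
                "Find drought datasets", "Cognitive search tool",
                "The user shall be able to search datasets by free-text query."),
    Requirement("REQ-002", "non-functional", "usability", "Port analyst",
                "Review pollution episodes", "Pilot application",
                "The dashboard shall load within an agreed response time."),
    Requirement("REQ-003", "functional", "filtering", "Water manager",
                "Find drought datasets", "Cognitive search tool",
                "The user shall be able to filter results by timespan."),
]

matrix = traceability_matrix(requirements)
for target, ids in matrix.items():
    print(target, "->", ", ".join(ids))
```

Keeping the persona and scenario on each record is what allows a requirement to be traced back to the user story it came from when the matrix is updated later in the project.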

Augmenting GEOSS data exploration | LIBRA

Develop the cloud-based front-end component and visualisation engine of the EIFFEL cognitive search tool.

Set up the data handling and management system for real-time metadata retrieval and querying to and from the EIFFEL augmented metadata database.

Create the EIFFEL CC-focused ontology.

Design and develop EIFFEL's NLP-based cognitive search engine.

Design and develop a diverse toolset for metadata curation, enrichment, augmentation and extension.

Provide Sentinel data (in raw format as well as related products) to the pilot applications operationally.

Explore the possibility of making composite search queries for Copernicus data based on conditions of interest.

The visualisation (or UI/UX) engine of the EIFFEL data exploration tools will offer map-based search or, equivalently, spatial search based on given coordinates, together with the standard set of filtering criteria that legacy engines already provide, e.g. dataset year/timespan, data provider, format, scale, resolution. Beyond this, an important functionality is that the engine will dynamically show an advanced tree-view checkbox filtering structure, driven by both the EIFFEL ontology structure and the concept relations and keywords revealed via NLP. The engine will prioritise the returned results according to their ‘relevance percentage’. More importantly, it shall present a list of the most frequent similar searches performed by other users of the EIFFEL cognitive data exploration tool, as well as a user-friendly and navigable list of relevant datasets external to GEOSS, returned by T3.4. The tool explores the information without knowing the structure of the datasets, taking advantage of the semantic relations among the defined terms. Technically speaking, the UI/UX will be implemented as a web-based tool permitting interoperability among digital systems and universal use, using a common data format (most probably JSON-LD). Moreover, the back-end of the engine will be cloud-based, supporting data storage, querying and retrieval. It will support full-text search over metadata as well as direct search based on cosine similarity for vector matching in cognitive search queries, in line with the T3.3 and T3.4 requirements.
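As a rough illustration of cosine-similarity-based vector matching, the sketch below ranks metadata records against a query vector. The function names, the toy vectors and the mapping of the similarity score to a 0-100 "relevance percentage" are assumptions made for this example; the text does not specify how the engine computes that percentage.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector matches nothing
    return dot / (norm_a * norm_b)


def rank_by_relevance(query_vec, records):
    """Return (record_id, percentage) pairs, most relevant first.

    Assumption: negative similarities are clipped to zero and the
    score is scaled to 0-100 to act as a relevance percentage.
    """
    scored = [
        (rec_id, round(100.0 * max(0.0, cosine_similarity(query_vec, vec)), 1))
        for rec_id, vec in records.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy embedding vectors for three hypothetical metadata records.
records = {
    "port-emissions": [0.0, 1.0, 0.0],
    "flood-maps": [1.0, 0.0, 1.0],
    "soil-moisture": [1.0, 1.0, 0.0],
}
ranked = rank_by_relevance([1.0, 0.0, 1.0], records)
for rec_id, pct in ranked:
    print(f"{rec_id}: {pct}%")
```

In a real deployment the vectors would come from the NLP components and the comparison would typically be delegated to the search back-end rather than computed in application code.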

WP3

WP4

Improving temporal, spatial resolution & data quality of CC related datasets | ICCS

Improve the quality and completeness of time series datasets by providing a stochastic toolbox facilitating temporal data augmentation tasks for GEOSS datasets.

Collect the in-situ data of local and/or regional nature that are relevant for each CC application.

Implement a novel processing cycle, capitalising on ML/super resolution and data fusion techniques of Sentinel data with the aforementioned in-situ data, aiming to enhance the spatial resolution of CC datasets.

Ensure quality assessment of GEOSS data with particular emphasis on CC applications while also incorporating users’ perspective in the metadata of the resources in a standardized way.

In this task, we will design and develop theoretically justified methods and tools based on statistical and probabilistic notions, such as those of time series analysis, stochastic processes and copulas, to address three key challenges of CC-related datasets: 1) lower-scale extrapolation (e.g., temporal downscaling), 2) infilling of missing values in time series, and 3) generation of statistically consistent stochastic realizations. The solutions delivered within this task will build upon the concept of Nataf's joint distribution, a notion closely related to copulas, that has recently been used successfully to address problems related to the stochastic simulation of non-Gaussian random variables, processes and fields. Here, we exploit the flexibility and generality of this concept, as well as its expandability, to address the above three challenges, delivering high practical value. The developed methods and tools will be applied both to physical processes, such as meteorological ones, e.g., precipitation and temperature (data required by almost all pilots, e.g., P2, P3, P5), wind direction, relative humidity (P3), surface solar radiation (P4) and surface soil moisture (P5), and to non-physical processes, such as emissions indices (P2) and air quality indices (P3). The power and utility of the tools will be demonstrated through proof-of-concept applications in several cases (see above), while the final output will be a general-purpose, easy-to-use and validated toolbox for engineers and researchers working with time series data (e.g., meteorological ones), substantially simplifying laborious tasks related to data (pre-)processing.
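The core idea behind Nataf-type simulation can be sketched as follows: generate a correlated Gaussian process, then map it through the standard normal CDF and the inverse CDF of the target marginal. The example below is a simplified illustration only. The exponential marginal, the AR(1) structure and all parameter values are assumptions chosen for brevity, and the step of computing the "equivalent" Gaussian correlation that reproduces a prescribed target autocorrelation is deliberately omitted.

```python
import math
import random
from statistics import NormalDist


def simulate_exponential_ar1(n, rho, mean, seed=0):
    """Correlated series with exponential marginals via a Gaussian AR(1).

    rho is the lag-1 correlation in the Gaussian domain; the correlation
    of the mapped series is generally somewhat different.
    """
    rng = random.Random(seed)
    std_normal = NormalDist()
    z = rng.gauss(0.0, 1.0)  # stationary start of the Gaussian process
    series = []
    for _ in range(n):
        u = std_normal.cdf(z)                # Gaussian value -> uniform
        u = min(max(u, 1e-12), 1.0 - 1e-12)  # guard the log below
        series.append(-mean * math.log(1.0 - u))  # inverse exponential CDF
        # Advance the unit-variance Gaussian AR(1) process.
        z = rho * z + math.sqrt(1.0 - rho ** 2) * rng.gauss(0.0, 1.0)
    return series


series = simulate_exponential_ar1(n=5000, rho=0.7, mean=2.0, seed=42)
sample_mean = sum(series) / len(series)
print(f"sample mean: {sample_mean:.2f} (target 2.0)")
```

Swapping the inverse CDF for another marginal (for example a mixed distribution for intermittent precipitation) changes the simulated variable without touching the Gaussian dependence model, which is the flexibility the text refers to.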

Development of the EIFFEL Climate Change applications based on GEOSS | IHE

Implement a DSS for assessing the impact of CC adaptation measures on the water and soil carbon conditions in the Aa river basin in NL.

Estimate carbon stock changes in agriculture, which may be used by farmers to manage microclimate effects and by the Lithuanian paying agency for monitoring NDCs, as per the PA-set targets.

Implement an analytical tool and DSS to assess the climate impact of the activity of the Balearic Port Authority, monitoring pollution episodes, correlating them with vessel routes and schedules, and assisting decision makers in optimising vessel traffic so as to lower the port's environmental footprint.

Implement a DSS to assess the impact of urban GHG mitigation scenarios, with respect to buildings energy efficiency, photovoltaic penetration and electromobility adoption in the Attica region.

Develop a risk assessment and decision support framework for droughts, forest fires and forest pests in Finland.

For all the applications, datasets from the GEOSS-centred EIFFEL tools for exploration and augmentation (WP3, WP4) will be obtained incrementally (alpha version M14, final version M18), allowing the WP5 applications to be developed in iterative cycles.

This task will focus on developing the modelling and DSS for assessing the impact of adaptation measures on the water and soil carbon conditions in the Aa river basin in the Netherlands. The hydrological/water management models will be developed at a detailed scale for testing measures on (groups of) individual properties/fields, while the soil carbon model will operate at the scale of major land use changes. ML emulators of these models will be set up, in close collaboration with T6.1, as needed for the DS components that will be used by identified stakeholders. Separate models will be developed for the Belgian part of the Aa river basin, using GEOSS data to assess the potential of such applications in cross-boundary river basins. Detailed requirements for the models will be obtained from the work of WP2 (especially Tasks 2.1, 2.2). The DSS will be web-based and will allow different stakeholders to view and test the adaptation measures in a user-friendly way.

WP5

WP6

Interpretability, integration & upscaling of EIFFEL Climate Change applications | i-BEC

Ensure a seamless collaboration predominantly with WP5 tasks, for rendering the developed CC applications interpretable and, thus, transparent and credible.

Identify and demonstrate capabilities of Explainable Artificial Intelligence (XAI) components for CC applications working with GEOSS data and implemented in the EIFFEL pilots.

Update, elaborate and execute the integration plan developed in T2.3, so that the WP7 pilots’ activities may commence at the defined time instances.

Ensure interaction and appropriate interfacing of WP5 DSS applications with WP3/WP4 developments (for the final version of the latter tools), in order to enable appropriate pilot testing and an efficient future creation of other CC applications by third parties; this shall follow incremental integration principles.

Demonstrate the potential for upscaling EIFFEL applications to a pan-European level, and for replication to other European pilots, in close interaction with Pilots 1 and 5.

This horizontal task will help to add interpretability capacity to the data-driven models (ML, DL) and applications of WP5 and will enable the CC applications to attain the merits of eXplainable AI (XAI), as detailed in Section 1.3.1.1.3, including understandability and transferability. This task will collaborate with T5.1-5.5 to identify where XAI would be of interest to the researchers and stakeholders. The specific activities are: 1) Assist the WP5 applications in the design and conceptualization phase of the ML-based applications, e.g. by helping determine the best methodology/workflow for the task at hand; 2) Apply an interpretability analysis to the derived models to understand their inner workings and identify whether the model has identified a causal connection between input and output; 3) Where applicable, perform robustness analysis and what-if scenarios to ascertain the models' transferability and test how they would perform under different scenarios (i.e. different inputs); 4) Where applicable and feasible, develop visual representations (e.g. knowledge graphs, trend analysis) of the interpretations derived by the models, depicting the knowledge captured in a way that is comprehensible to end-users and researchers.
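One common model-agnostic way to carry out an interpretability analysis of the kind described in activity 2 is permutation feature importance: shuffle one input at a time and measure how much the model error grows. The sketch below is an assumption about what such an analysis could look like, not the method the task commits to; the toy model and data are hypothetical. Note that this technique reveals which inputs the model relies on, which is evidence about association rather than proof of a causal connection.

```python
import random


def mean_squared_error(model, rows, targets):
    """Average squared difference between predictions and targets."""
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_features, seed=0):
    """Increase in error when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the target
        permuted = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(mean_squared_error(model, permuted, targets) - baseline)
    return importances


def toy_model(row):
    """Hypothetical model: depends on feature 0 only, ignores feature 1."""
    return 3.0 * row[0] + 0.0 * row[1]


data_rng = random.Random(1)
rows = [[data_rng.random(), data_rng.random()] for _ in range(200)]
targets = [toy_model(r) for r in rows]

importances = permutation_importance(toy_model, rows, targets, n_features=2)
for j, score in enumerate(importances):
    print(f"feature {j}: error increase {score:.4f}")
```

The same loop doubles as a simple what-if probe for activity 3: replacing the shuffle with a systematic perturbation of one input shows how predictions respond to a changed scenario.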

EIFFEL Pilot demonstrations & impact assessment | SYKE

List and elaborate evaluation KPIs for the technical, operational, environmental and societal impact assessment of the pilot demonstrations; perform and report the assessment against those KPIs.

Prepare and execute the pilot demonstrations in each of the selected GEO SBAs.

Improve CC application developments based on real world testing and implementation as well as stakeholders’ feedback.

Identify benefits and gaps of using GEOSS data in Climate Change applications.

Assess environmental impact and contributions to policy goals (PA, SDGs, SFDRR), and provide recommendations and guidance for best practice.

This task will coordinate the assessment of pilot applications including the stakeholder engagement (together with T8.2). The results of the intermediate and final assessments of pilot demonstrations will be captured in a structured way and jointly interpreted against objectives (KPIs, starting from Table 1 and elaborated together with pilot partners and CoPs stakeholders within this task) and user needs (T2.1). The contributions to policy goals and recommendations from pilots, including operational aspects, benefits and gaps for the integration of GEOSS data and exploration tools, best practices and lessons learned (T7.2-7.6) from national or sub-national perspective, will be compiled and synthesized in a systematic way.

WP7

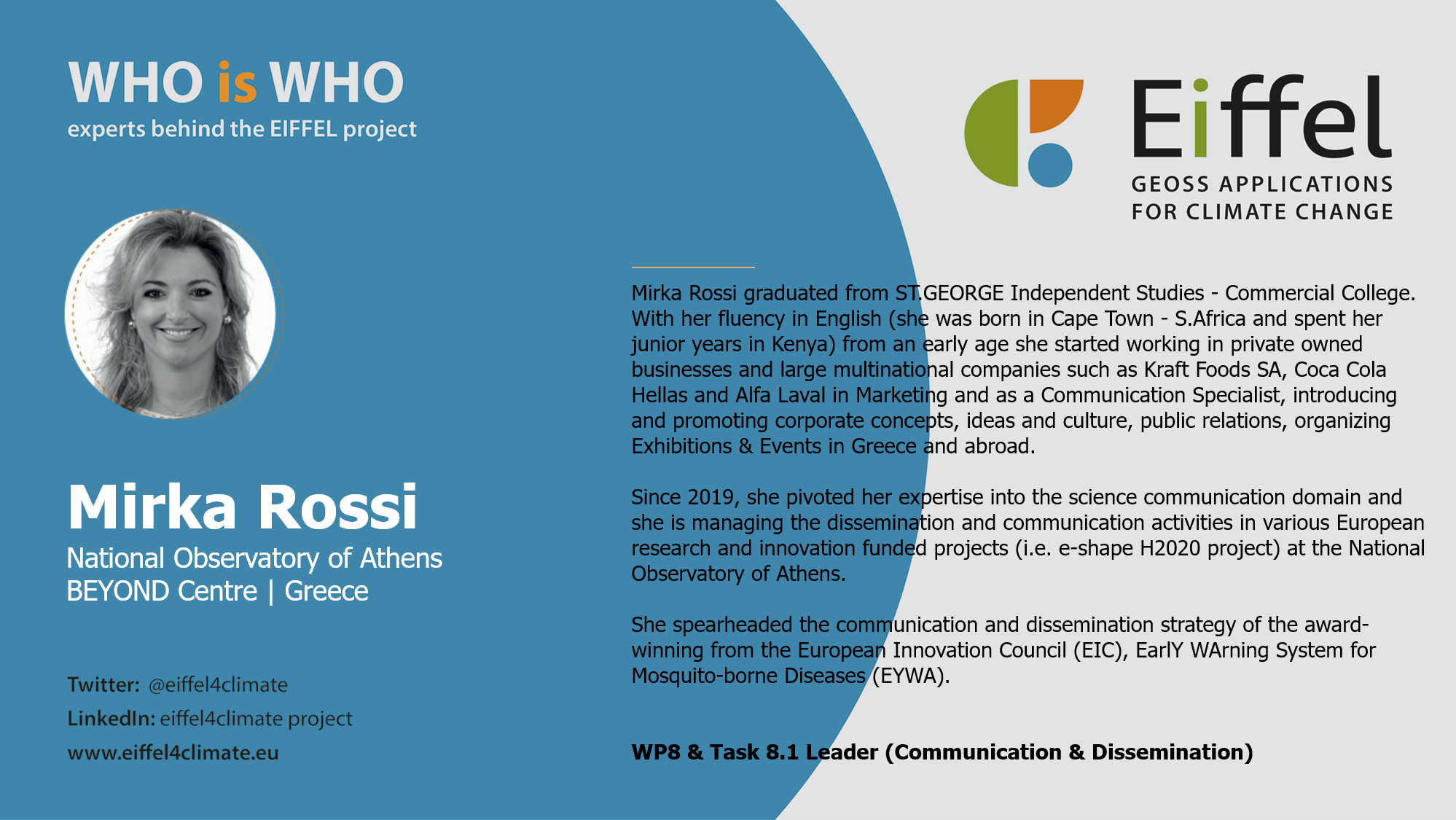

WP8

Impact creation & EIFFEL sustainability | NOA

The overall aim of WP8 is to maximise project impact through an effective campaign of communication, dissemination and engagement activities. This will be achieved by: (i) raising awareness of and encouraging engagement with the project for targeted audiences and (ii) disseminating project results.

This task will increase uptake by raising awareness of the solutions developed, through tailored and well-targeted communication, dissemination and outreach activities and synergies with other projects, making EIFFEL known in the GEO and EO communities and user groups.

Management